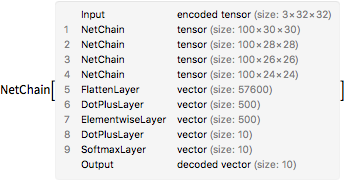

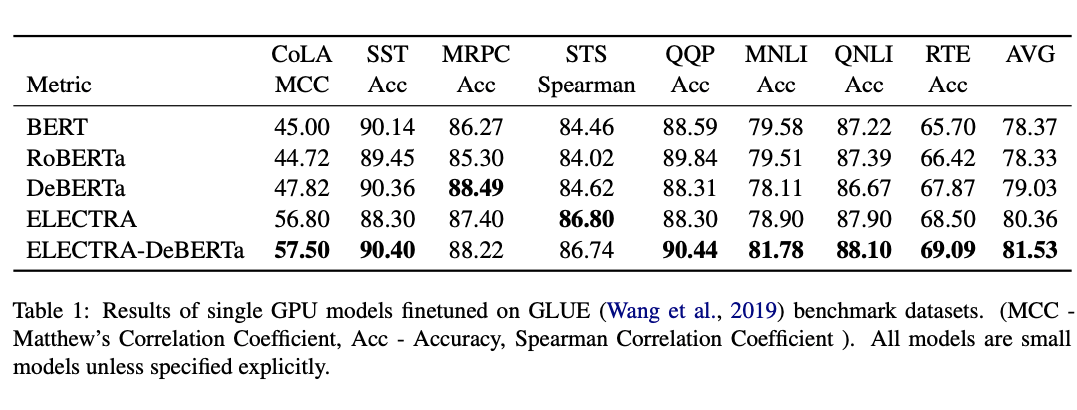

Small-Bench NLP: Benchmark for small single GPU trained models in Natural Language Processing | by Bhuvana Kundumani | Analytics Vidhya | Medium

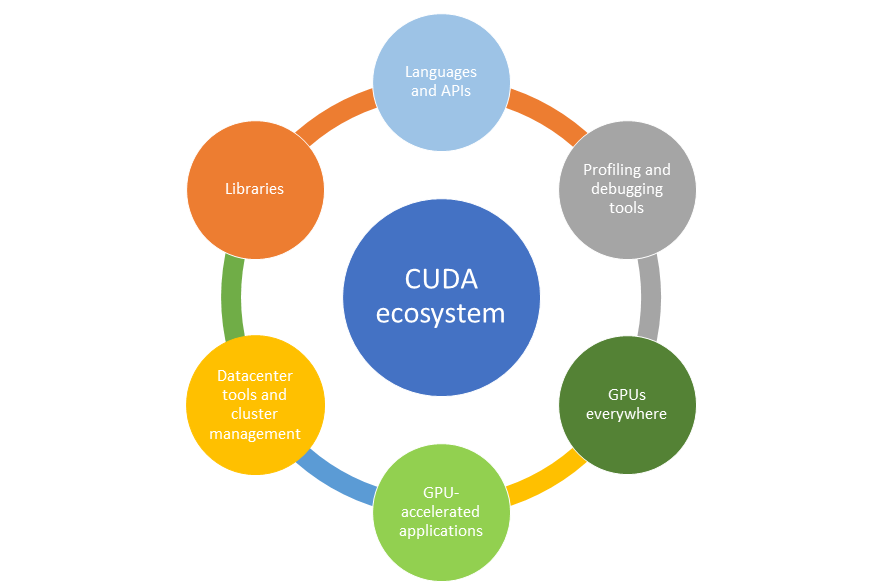

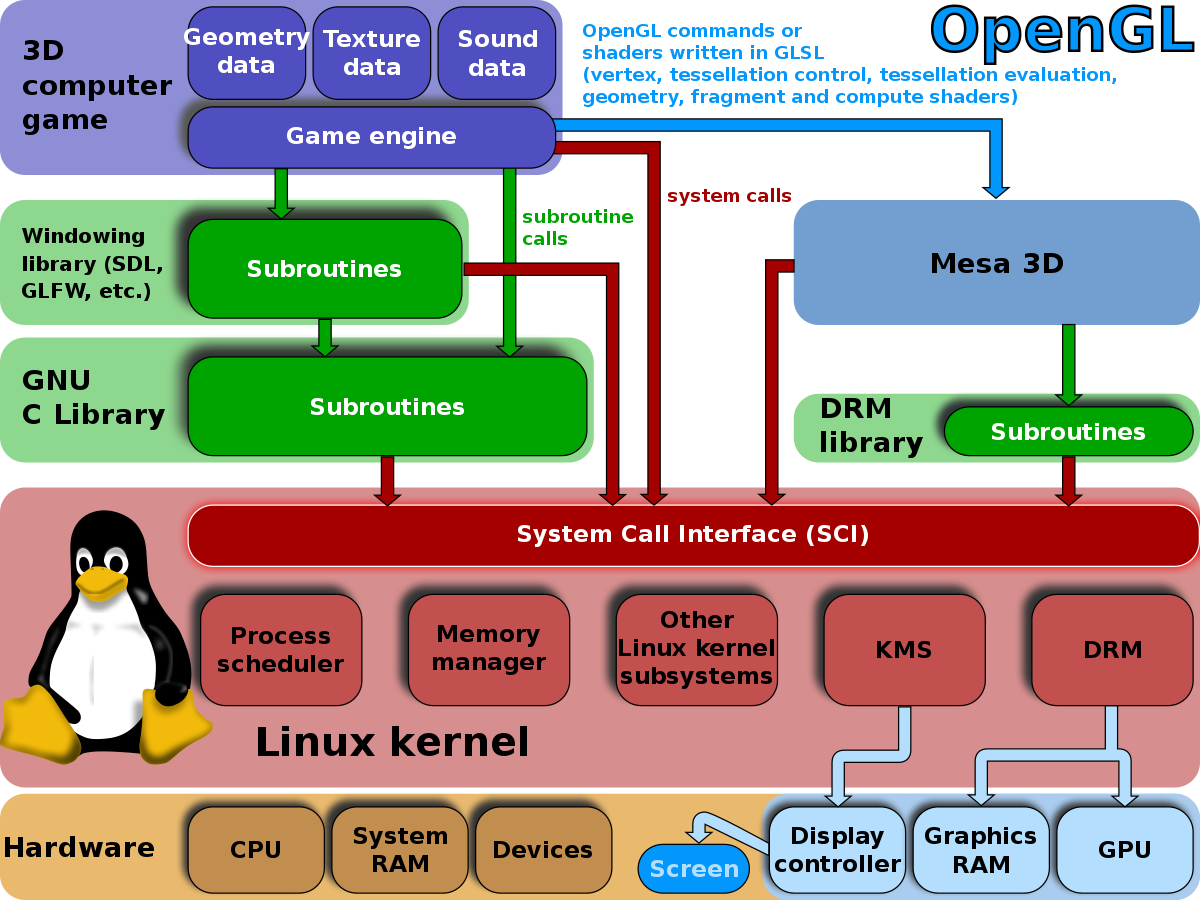

Figure 1 from Graphics processing unit (GPU) programming strategies and trends in GPU computing | Semantic Scholar

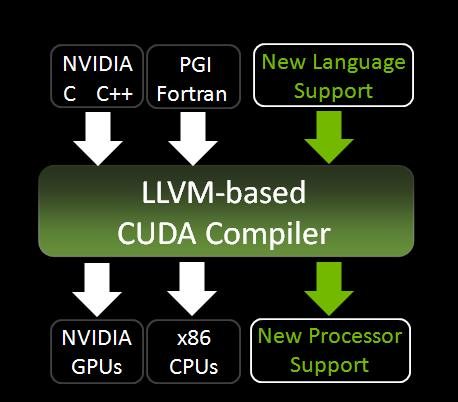

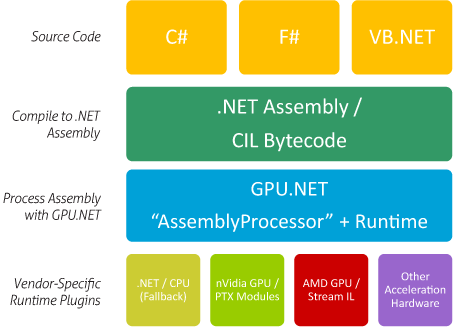

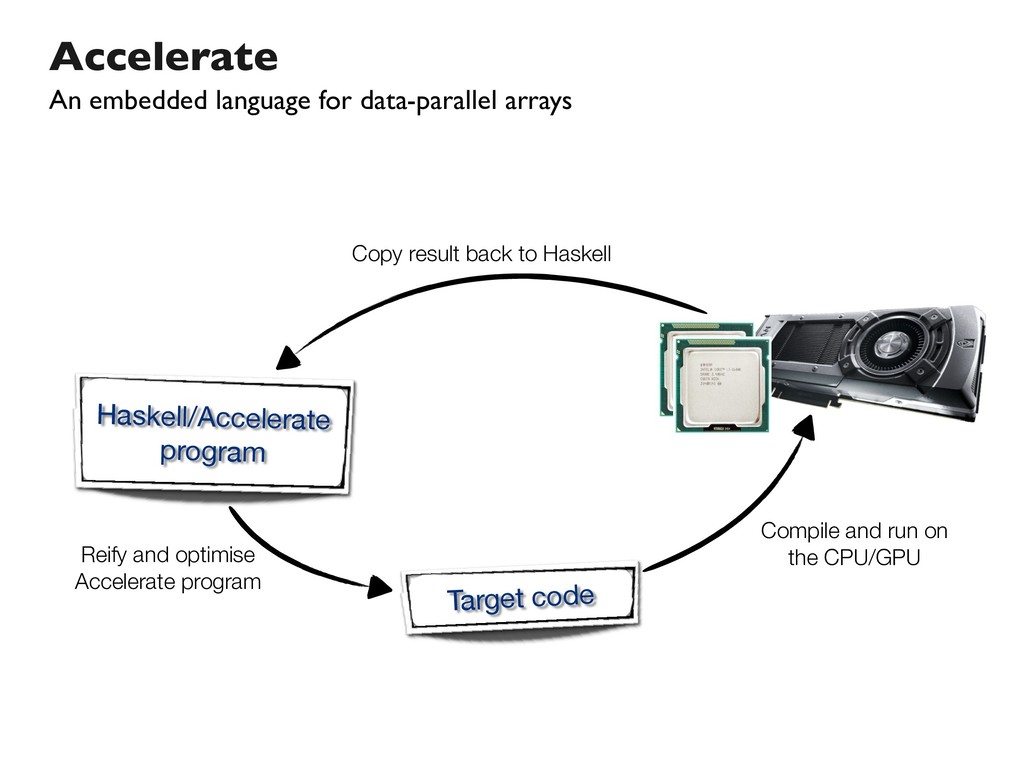

4 Evolution of GPU programming languages. Initially: since 2007 general...

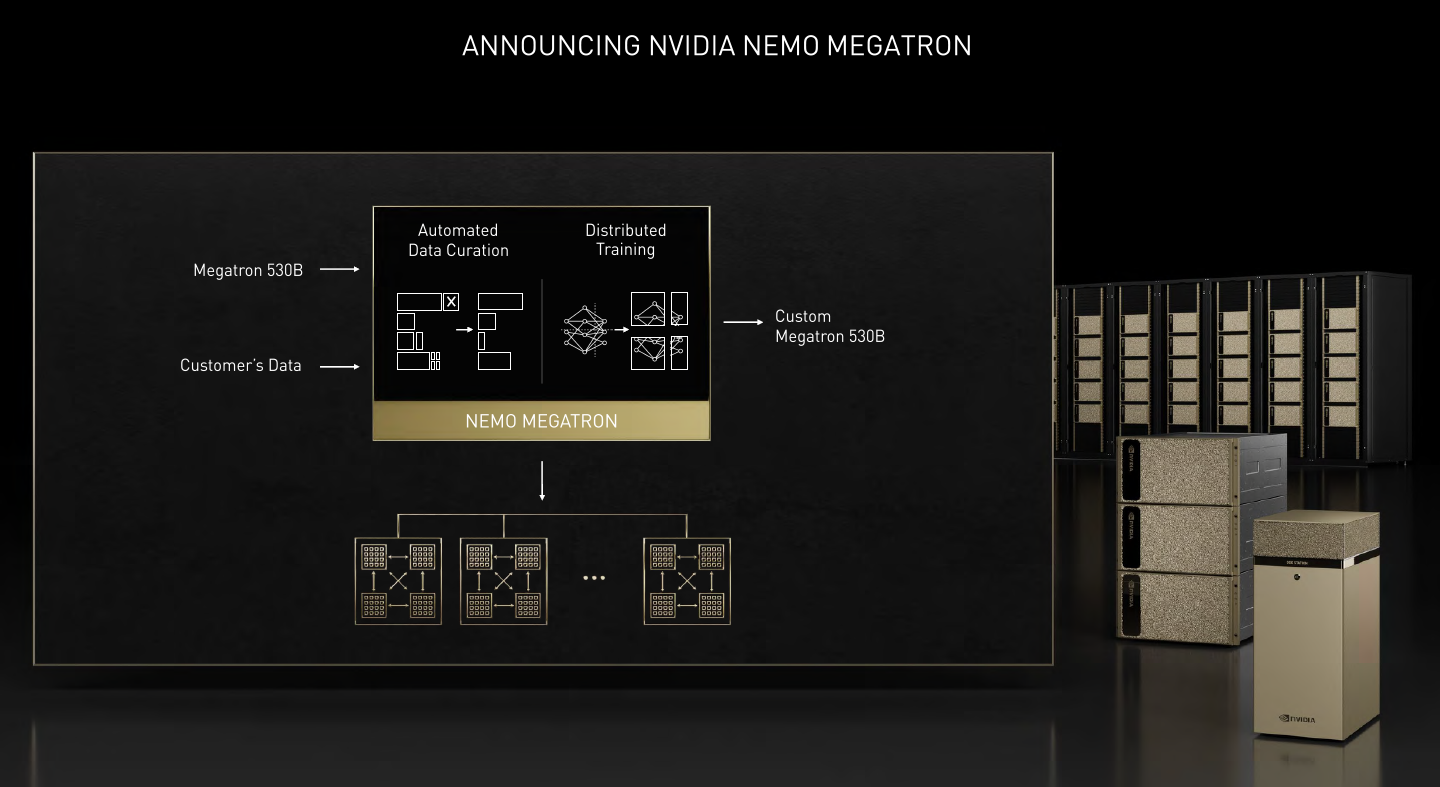

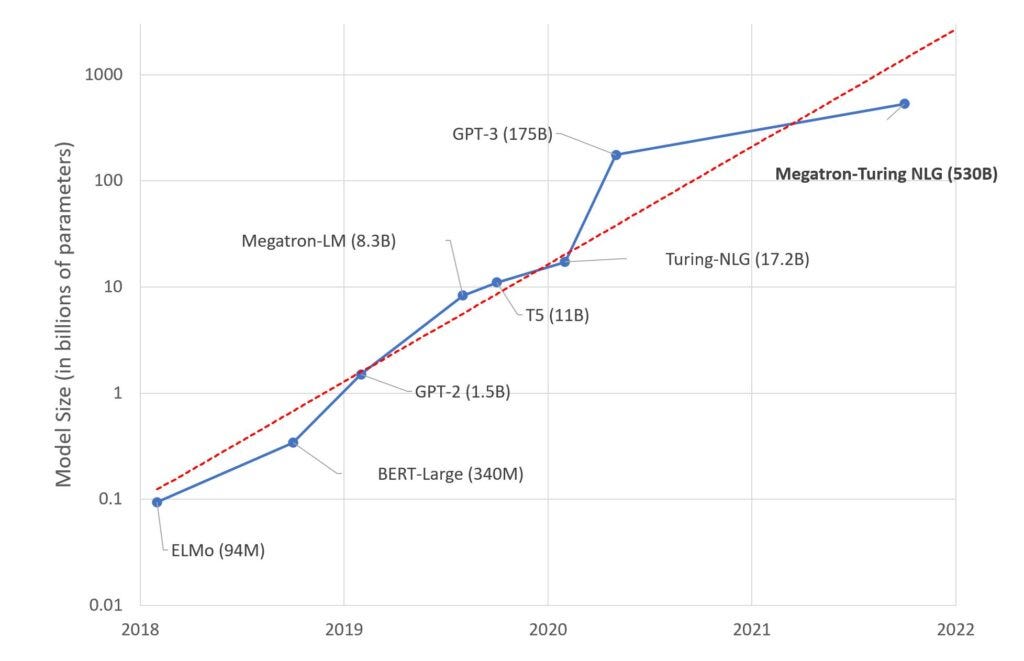

Microsoft and Nvidia create 105-layer, 530 billion parameter language model that needs 280 A100 GPUs, but it's still biased | ZDNet